Last year, following the BattleSnake event, I (Scott) wrote a blog post that went into the technical design and strategy of our Bounty Snake, the Redbrick Robosnake.

The post was well-received, and a few people asked me this year if there would be another one for our 2018 Bounty Snake, Son of Robosnake. Well, the wait is over, and the answer is yes!

This post will follow the same format as last year’s post (we’ll use the same headings), but with a focus specifically on what has changed since last year. In other words, I’m not going to explain Minimax again, or talk about any part of the code that wasn’t changed, so go read last year’s post if you haven’t already! I’ll wait.

Prerequisites

Once again, we needed two things to get started: a game server to run some practice matches on, and some enemy snakes to practice against. There were plenty of tough enemy snakes from last year open-sourced on GitHub that we could (in theory) practice against, but the snake API changed for 2018, so the official game server would not support them.

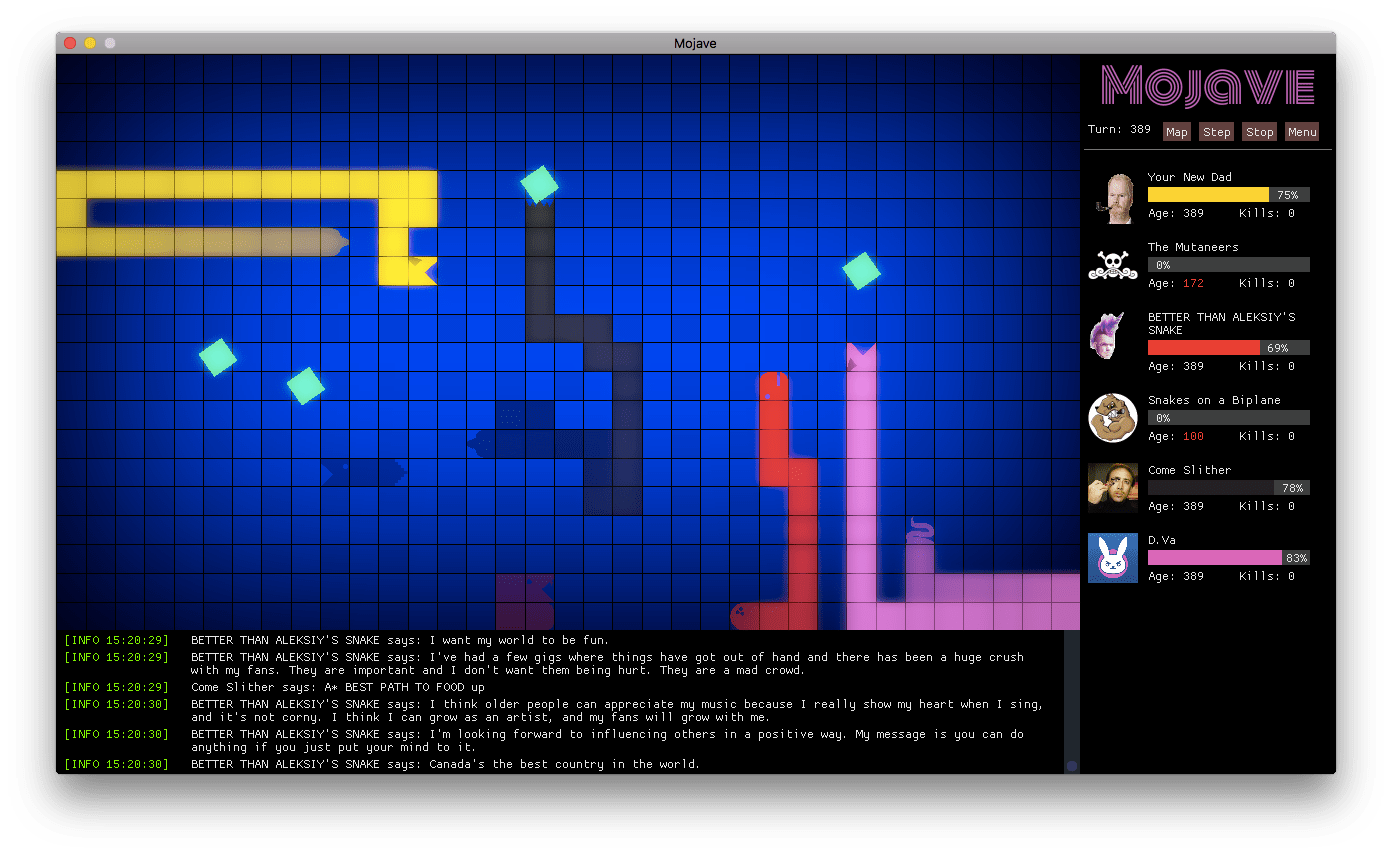

What to do? I ended up dusting off Mojave, the quick-and-dirty Battlesnake game server I wrote last year, modifying it to speak the 2018 API, and adding support for specifying the API version on a per-snake basis. So using Mojave, we could have 2018 snakes playing against 2017 and even 2016 snakes in the same game. I also prettied it up with better graphics and a better soundtrack.

A couple of the snakes that we used to test Robo this year included:

- Your New Dad - https://github.com/andreirtaylor/yournewdad - this was one of the three snakes that beat Robo last year.

- Better Than Aleksiy’s Snake - https://github.com/rdbrck/battlesnake-2017-btas - last year’s winner of the Expert division (and also a Redbrick snake).

If you want to try out Mojave it can be downloaded here: https://github.com/smallsco/mojave - it’s a desktop application that runs on Windows, Mac OS, and Linux - note that this is not a Redbrick product, just a Scott project, and no support is offered for it.

Also, if you do use it, please test your snakes under the official game server as well, as there may be subtle differences in how things are handled internally! Oh, by the way - Mojave has both the original Robosnake, and Son of Robosnake built into its code, so you can play them without having to set up your own server.

Initial Design

While Robo performed very well last year, I had a few frustrations with it. First of all, Robo played too defensively. It would never move into a square that the enemy snake could also move into. This means that games could go on for a very long time, and Robo’s only path to victory was by trapping the enemy.

Secondly, Robo had terrible performance, taking just short of one second (on average) to calculate its next move. This delay also caused games to go on for a very long time and frustrated many of the competitors that wanted to play us. It also meant that we could not handle playing more than one or two games at once without timeouts.

Finally, because we disabled logging for production (for performance reasons), when Robo did lose a game, we had no way of finding out why. We wanted to come up with a way to log games and “play them back” at a later date, without a performance loss. This year, Tyler and Erika joined me on the Bounty Snake team to help make these improvements.

Algorithm

The core algorithm (the alpha-beta pruning variant of Minimax) did not change from the previous year. However, we corrected a major bug in the implementation. Minimax is fundamentally an algorithm for predicting the future of a turn-based game.

The game of BattleSnake, however, is not turn-based: all snakes execute their moves simultaneously. So in the original design, it was possible for Robo to mispredict the game if we reached an endgame state (and started evaluating the board heuristic) before all snakes had completed their moves.

So how do we address this? The answer came to me after reading this paper (based on a Tron game AI): http://studentnet.cs.manchester.ac.uk/resources/library/3rd-year-projects/2014/adam.gill-3.pdf - if we’re maximizing (computing Robo’s move), no change. If we’re minimizing (computing the enemy’s move), rather than using the current state of the board, we start with the board state as it was prior to computing Robo’s move.

Finally, we only evaluate the board heuristic when the recursion depth is at an even value, meaning that both our move and the enemy’s move have been considered. This bug fix was also necessary in order to implement support for head-on-head collisions in the heuristic.
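To make the fix a little more concrete, here is a minimal sketch of the idea in Python. It is not Robo's actual code (Robo is written in Lua); the toy 3x3 board, the head-only snakes, the `evaluate` heuristic, and every function name are assumptions made for illustration. The minimizing ply picks the enemy's move from the board as it was before our move, both moves are then applied together, and the heuristic only runs once both snakes have moved.

```python
import math

# Toy model (assumption): each snake is just a head on a small board, y grows downward.
MOVES = {"up": (0, -1), "down": (0, 1), "left": (-1, 0), "right": (1, 0)}
WIDTH, HEIGHT = 3, 3


def legal_moves(head):
    """Moves that keep a head on the toy board."""
    x, y = head
    return [m for m, (dx, dy) in MOVES.items()
            if 0 <= x + dx < WIDTH and 0 <= y + dy < HEIGHT]


def step(head, move):
    dx, dy = MOVES[move]
    return (head[0] + dx, head[1] + dy)


def evaluate(me, enemy):
    """Toy heuristic: a head-on collision loses (the enemy is assumed larger)."""
    if me == enemy:
        return -1000
    return abs(me[0] - enemy[0]) + abs(me[1] - enemy[1])


def minimax(me, enemy, depth, maximizing,
            alpha=-math.inf, beta=math.inf, pending=None):
    """Return (score, best_move). Call with an even depth and maximizing=True."""
    # The heuristic is only evaluated on a maximizing ply, i.e. after BOTH
    # snakes have made the same number of moves.
    if maximizing and (depth <= 0 or me == enemy):
        return evaluate(me, enemy), None

    if maximizing:
        best, best_move = -math.inf, None
        for move in legal_moves(me):
            # Our move is remembered but NOT applied yet: the enemy must
            # choose from the same pre-move board that we chose from.
            score, _ = minimax(me, enemy, depth - 1, False, alpha, beta, move)
            if score > best:
                best, best_move = score, move
            alpha = max(alpha, best)
            if alpha >= beta:
                break
        return best, best_move

    best = math.inf
    for move in legal_moves(enemy):
        # Both moves are applied together, modelling simultaneous play.
        score, _ = minimax(step(me, pending), step(enemy, move),
                           depth - 1, True, alpha, beta)
        best = min(best, score)
        beta = min(beta, best)
        if alpha >= beta:
            break
    return best, None
```

With our head at `(0, 0)` and a larger enemy at `(0, 2)`, `minimax((0, 0), (0, 2), 2, True)` avoids stepping down into the square the enemy can also reach and moves right instead.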

Game Logic

Another very important bug fix involved the tracking of snake tails. Robo incorrectly assumed that when a snake eats a piece of food, the snake would grow on the same turn, and the existing tail wouldn’t be removed on that turn. This is incorrect - what actually happens is that the snake moves normally on the turn that it eats the food, and then it grows (its tail is cloned) on the next turn of the game.

This bug caused an off-by-one error in the position of enemy snakes on the turn after they ate food. At the same time, we made sure that enemy snake tails would be safe squares to move into as long as the enemy snake had not eaten that turn. This bug also had to be fixed in Mojave - when your snake seems to perform better on your own game board than on the official board, it’s a good sign that there’s something wrong. ;)
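Here is one way to read the corrected rule, sketched in Python rather than Robo's Lua. The function names and the head-first list representation are assumptions, and the official server may order these steps differently internally. The point is only that growth is delayed by a turn, and that a tail square stays occupied on the turn a snake grows.

```python
def advance(body, new_head, ate_last_turn):
    """Return the body after one move. `body` is a list of (x, y), head first.

    Growth is delayed: the move that eats food is a normal move, and the
    tail is cloned on the following turn.
    """
    if ate_last_turn:
        # Clone the tail before moving, so the old tail square stays occupied.
        body = body + [body[-1]]
    # Normal move: the head advances and the last segment is dropped.
    return [new_head] + body[:-1]


def tail_is_safe(ate_this_turn):
    """A tail square is vacated on the next move unless that snake just ate."""
    return not ate_this_turn
```

For example, `advance([(2, 0), (1, 0), (0, 0)], (3, 0), False)` leaves the snake at length 3 with `(0, 0)` vacated, while passing `True` keeps `(0, 0)` occupied and returns a body of length 4.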

A new feature of the official game board this year was a test endpoint that runs a series of smoke tests against a given snake URL. When running these tests against Robo, we noticed that when the only moves available all lead to death, the tests consider death at the hands of an enemy snake a “pass” and death by a wall a “fail.” So we updated our failsafe logic to always prefer snake deaths over wall deaths in that scenario.
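A minimal sketch of that failsafe preference, in Python rather than Robo's Lua. The function name, the move ordering, and the coordinate convention (origin at the top-left, y growing downward) are assumptions. It is only meant to be called once every move is already known to be fatal, so any in-bounds neighbour must be a snake.

```python
MOVES = {"up": (0, -1), "down": (0, 1), "left": (-1, 0), "right": (1, 0)}


def failsafe_move(head, width, height):
    """Pick a move when every move is fatal, preferring snakes over walls."""
    for move, (dx, dy) in MOVES.items():
        x, y = head[0] + dx, head[1] + dy
        if 0 <= x < width and 0 <= y < height:
            # In bounds and fatal, so this square holds a snake: take it.
            return move
    # Only walls around us (possible only on a 1x1 board): any move will do.
    return "up"
```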

Heuristic

The heuristic was the biggest change in Robo’s design between last year and this year.

First, we taught Robo the rules of head-on-head collisions, so that they would be considered as valid moves both from our own play and when predicting the enemy’s behavior. This also meant allowing Robo to move into squares that the enemy is capable of moving into.

But, just knowing that head-on-head collisions are possible means nothing if we’re rarely ever placed into a situation in which they can be used. So the “keep-to-center” logic was removed and replaced by aggression logic that scores the game board higher as we approach a square that the enemy can move into on the next turn. This distinction is important - if we were to target the enemy’s head, an attack would cause us to move into its neck instead.

After some testing, I made Robo even more aggressive by completely ignoring food on the board unless Robo’s health was less than or equal to 40. This number seemed to be a good balance between bullying other snakes and hunting for food during tests under Robo’s win conditions.
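The two ideas above (aim for the squares next to the enemy's head, and ignore food unless hungry) might look roughly like this. This is a Python sketch, not Robo's Lua heuristic: the weights, the Manhattan distance metric, and the function names are assumptions, and walls and snake bodies are ignored to keep it short. Only the health threshold of 40 comes from the post.

```python
HUNGER_THRESHOLD = 40  # from the post: food matters only at or below this health


def manhattan(a, b):
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def neighbors(square):
    x, y = square
    return [(x, y - 1), (x, y + 1), (x - 1, y), (x + 1, y)]


def aggression_score(my_head, enemy_head, weight=1.0):
    """Score rises as we approach a square the enemy can move into next turn.

    The targets are the squares around the enemy's head, not the head itself.
    """
    nearest = min(manhattan(my_head, target) for target in neighbors(enemy_head))
    return -weight * nearest


def food_score(my_head, health, food, weight=1.0):
    """Food is ignored entirely unless we are hungry."""
    if health > HUNGER_THRESHOLD or not food:
        return 0.0
    return -weight * min(manhattan(my_head, f) for f in food)


def board_score(my_head, health, enemy_head, food):
    return aggression_score(my_head, enemy_head) + food_score(my_head, health, food)
```

In this sketch a snake at health 41 scores a board with and without food identically, while at health 40 the distance to the nearest food starts to count.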

Another important change this year is that if Robo predicts its victory, it will continue to evaluate the entire board heuristic rather than giving every victory move the same board score. This means that a move that results in “victory in 3 moves” will be favored over a move that results in “victory in 4 moves.” Previously these two situations would have been given the same board score, which means the alpha-beta pruning algorithm would have stopped evaluating other possible victory conditions after assessing the first. This also offers us some protection against situations where we predict the enemy’s move incorrectly.

Challenges

Other Aggressive Snakes

Robo’s improved heuristic worked very well against nearly every other snake I threw at it. I’d downloaded many snakes from GitHub for testing, both from the current and the previous year. But then, I discovered B-Snake: https://github.com/mnursey/b-snake

B-Snake’s strategy was similar to our own in that it would start playing very aggressively against smaller snakes.

Since Robo didn’t favor food unless hungry, it would perform very poorly against this snake, dying more often than it won. For a while, I thought our goose was cooked, but we were able to make three changes that improved our performance against B-Snake significantly:

- If Robo is no longer than the default starting length (3), consider food on the board as if Robo was hungry. This causes us to grow to 4 as quickly as possible (before becoming aggressive), and gives us an early game advantage over snakes that prioritize anything other than food in the first few moves of the game.

- When being aggressive, instead of weighting every square around the enemy’s head equally, the square that corresponds to their current movement direction was weighted slightly higher. This change resulted in Robo executing diagonal attacks more often, whereas previously it would drag itself alongside the enemy.

- Finally, the outer edge of the gameboard was weighted very unfavorably, a defense mechanism to try and prevent enemy snakes from pinning us up against the wall.
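The last two changes in the list above can be sketched as follows, in Python rather than Robo's Lua. The bonus and penalty values, the function names, and the use of the enemy's neck to infer its direction of travel are all assumptions.

```python
def attack_weights(enemy_head, enemy_neck, base=1.0, forward_bonus=0.25):
    """Weight each square the enemy can move into next turn.

    The square straight ahead of the enemy (continuing its current
    direction) is weighted slightly higher than the squares to its sides.
    """
    dx = enemy_head[0] - enemy_neck[0]
    dy = enemy_head[1] - enemy_neck[1]
    ahead = (enemy_head[0] + dx, enemy_head[1] + dy)
    weights = {}
    for ox, oy in ((0, -1), (0, 1), (-1, 0), (1, 0)):
        square = (enemy_head[0] + ox, enemy_head[1] + oy)
        if square == enemy_neck:
            continue  # the enemy cannot reverse into its own neck
        weights[square] = base + (forward_bonus if square == ahead else 0.0)
    return weights


def edge_penalty(head, width, height, penalty=50.0):
    """Score the outer ring of the board very unfavorably."""
    x, y = head
    on_edge = x in (0, width - 1) or y in (0, height - 1)
    return -penalty if on_edge else 0.0
```

For an enemy travelling right with its head at `(2, 2)`, the square `(3, 2)` gets the higher weight and the two side squares get the base weight.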

B-Snake came to challenge us on the day of the competition, and I’m happy to report that Robo stood its ground.

API Timeout and Performance Issues

Robo’s performance last year was abysmal, taking nearly one second to calculate its moves. We wanted to figure out what was causing the performance issues and address them, so we ran a Lua profiler through the codebase, and identified/corrected three issues:

- We used a library called inspect.lua for pretty-printing tables. This library is very slow, and we call it a lot when logging. We thought that this was disabled when disabling logging - but even though our log() calls were no-oped, the inspect() calls that got passed into log() were still getting executed, crippling performance.

- We were using a deep copy function to copy the game state table by value instead of by reference so that as we move up and down the minimax tree, we can make alterations to the game state without affecting other branches at the same depth. This function also copied Lua metatables, which we did not make use of but added a lot of overhead.

- This was the big one - if you read last year’s blog, you’ll know that Robo executes a floodfill whenever the board heuristic is evaluated. This is used to determine if moving into some area of the board may cause us (or the enemy) to get trapped, and as a weighting parameter for the overall board score. However, it is a costly operation. We changed the floodfill so that rather than checking the entire board, it only checks double our current length plus the amount of food on the board.
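Two of these fixes translate well to a short sketch. The Python below is not Robo's Lua code, and all names are made up for illustration. The first half shows why a no-op `log()` does not save the cost of formatting its argument (arguments are evaluated before the call, in Python as in Lua), plus one common workaround. The second half is a flood fill that stops once it has counted `2 * length + food` squares, which is the cap described in the last item.

```python
from collections import deque

LOGGING_ENABLED = False  # the "production" setting in this sketch


def pretty(table):
    """Stand-in for an expensive pretty-printer such as inspect.lua."""
    pretty.calls += 1
    return repr(table)


pretty.calls = 0


def log(message):
    if LOGGING_ENABLED:
        print(message)


def log_lazy(make_message):
    """Takes a function, so the message is only built when logging is on."""
    if LOGGING_ENABLED:
        print(make_message())


state = {"turn": 1}
log(pretty(state))               # pretty() still runs, its result is discarded
log_lazy(lambda: pretty(state))  # pretty() is never called


def floodfill_limit(my_length, food_count):
    return 2 * my_length + food_count


def bounded_floodfill(start, blocked, width, height, limit):
    """Count open squares reachable from `start`, giving up at `limit`."""
    seen = {start}
    queue = deque([start])
    count = 0
    while queue and count < limit:
        x, y = queue.popleft()
        count += 1
        for dx, dy in ((0, -1), (0, 1), (-1, 0), (1, 0)):
            nxt = (x + dx, y + dy)
            if (0 <= nxt[0] < width and 0 <= nxt[1] < height
                    and nxt not in blocked and nxt not in seen):
                seen.add(nxt)
                queue.append(nxt)
    return count
```

A result below the limit means the area is a pocket smaller than the cap. A result equal to the limit means there is at least that much room, and the search stopped without visiting the rest of the board.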

It turned out to be a good thing that we addressed these issues, because unlike last year, Bounty Snakes were not allowed to customize the response time for their win conditions - it was fixed at 200ms by the game server!

Starvation Bug

Here’s one that we still haven’t managed to figure out. Sometimes, Robo decides not to target food at all, and starves itself. This tends to happen more often when a small enemy snake is near the center of the board, food is near the edge of the board, and the enemy snake is chasing its own tail.

Two of Robo’s losses this year were due to snakes that either accidentally or intentionally managed to exploit this bug. If anyone can figure out why it happens, please let us know!

Improved Logging

Erika spent a great deal of time working on an enhanced logging/replay mechanism for Robo. Unfortunately, a last-minute deployment bug prevented us from running it on the day of the tournament.

We hope to have it up and running for next year - Erika has written an excellent blog post on its design which should be up in a day or two - stay tuned!

Production Deployment

While we became less concerned about how many games Robo could play concurrently once the performance issues were addressed, we still felt that we could make improvements here. Son of Robosnake was deployed to a c5.2xlarge instance in the us-west-2 region on Amazon Web Services.

This instance had eight cores, so we set up OpenResty (nginx) to use eight worker processes that listened over unix sockets. On HTTP port 80 a load balancer was configured to round-robin requests over each worker. With this configuration, we felt confident that Robo could handle as many concurrent games as competitors could throw at it.

The Bounty Snake Bracket

This one hurts a little! Sendwithus announced that all bounty snakes would participate in a standard tournament match, with win conditions not revealed until shortly before the tournament began.

Robo wasn't designed for large arenas, low-food conditions, or when playing with multiple snakes on the board. We do have a fail-safe mechanism in place for multiple snakes to keep from crashing (when there’s more than one enemy, select the closest one to us for minimax and completely ignore the rest).
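That fail-safe amounts to a nearest-enemy selection, sketched here in Python rather than Robo's Lua. The dictionary layout, the snake names, and the Manhattan distance metric are assumptions. Note that Python's `min` returns the first of several equally close candidates, so in this sketch a tie goes to whichever snake appears first in the list.

```python
def manhattan(a, b):
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def pick_enemy(my_head, enemies):
    """Choose the single enemy that minimax will model; the rest are ignored.

    `enemies` is a list of dicts in game-state order, each with a "head".
    """
    return min(enemies, key=lambda snake: manhattan(my_head, snake["head"]))


enemies = [
    {"name": "first-in-list", "head": (4, 0)},   # 4 squares away
    {"name": "second-in-list", "head": (0, 4)},  # also 4 squares away
]
chosen = pick_enemy((0, 0), enemies)  # the tie goes to "first-in-list"
```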

I also added a last-minute tweak that tries to balance food and aggression if there are 8 or fewer pieces of food on the board. There wasn’t much we could do to practice for this, not knowing what win conditions we’d be playing under and of course not having access to the other bounty snakes’ code.

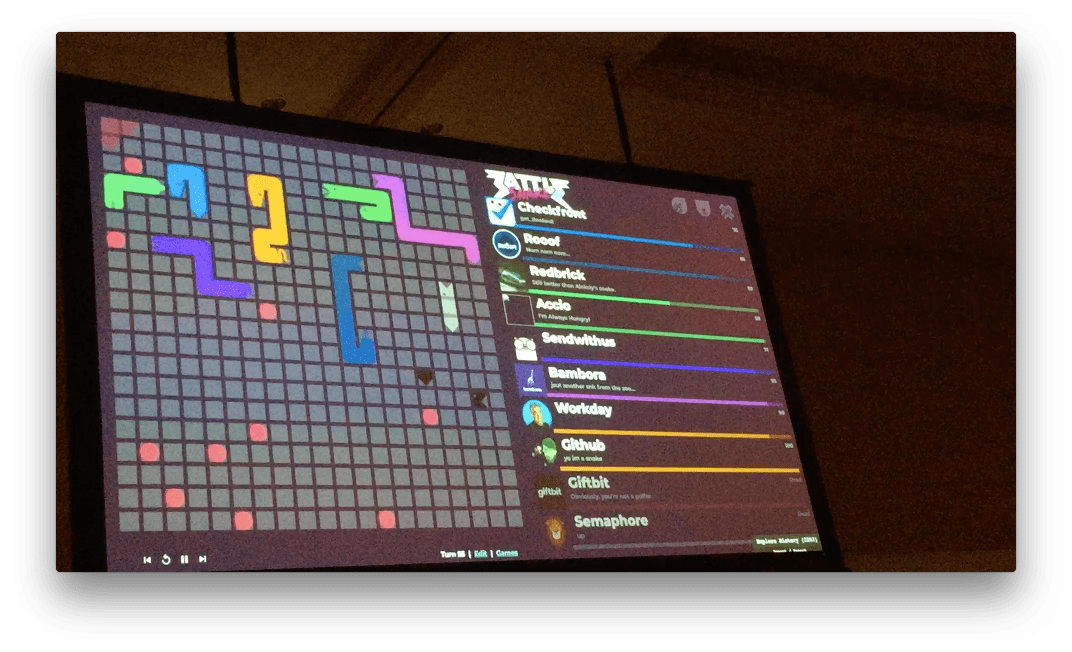

The two exceptions were Checkfront and Rooof, which open-sourced their snakes prior to the tournament (Rooof’s has since been taken down), and both of which Robo could beat in 1-on-1 matches. On the day of, the win conditions were revealed as 10 food (good!) on a 20x20 board (okay). While Robo managed to execute a beautiful kill shot against Giftbit’s snake into the top-left corner, it soon got caught near two other snakes.

Pictured above is what the board looked like two moves before Robo’s death (Robo is the green snake in the top left). Note that Checkfront’s snake (at Robo’s tail) and Sendwithus’ snake (purple snake below Robo) are both at an equal distance from Robo (four squares to each of their heads).

Because they are equal, Checkfront’s snake, which appeared first in the game state data, was selected as the “enemy” for Minimax, while SWU’s snake was ignored. This caused Robo to move down towards the food. SWU’s snake moved towards the food as well (see below).

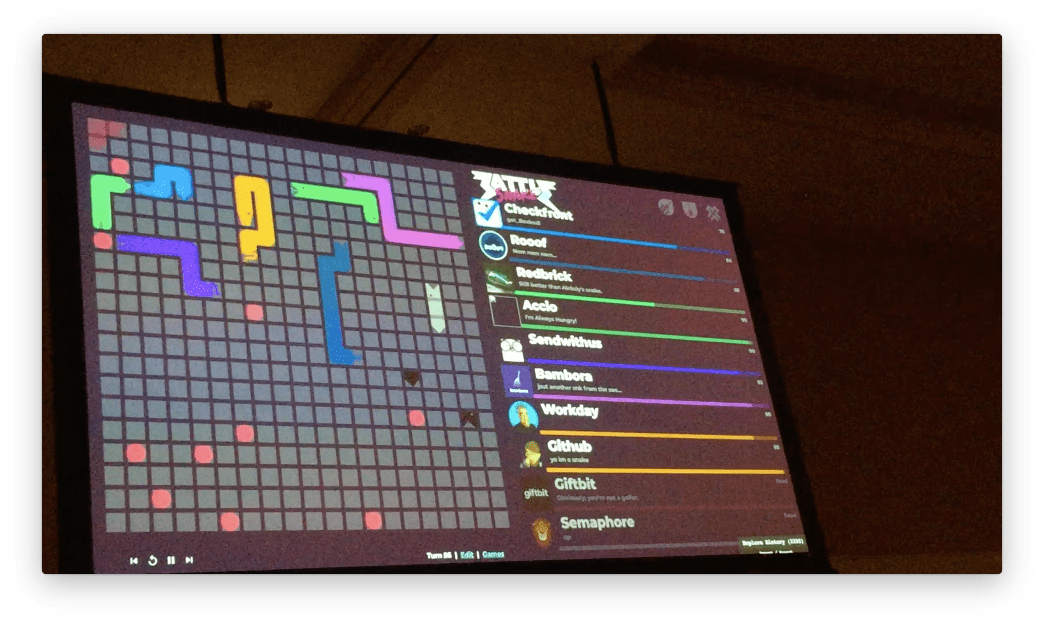

On the next turn (pictured above), as SWU’s snake was now closer to Robo than Checkfront’s snake, it was then selected as the “enemy” for Minimax prediction. The algorithm correctly predicted that both moves available to Robo at this point (eating the food, or moving one square right) had the potential of death by a head-on-head collision, as SWU’s snake was larger than Robo.

A move was randomly chosen (as they both had the same weight), resulting in Robo going down to eat the food. Unfortunately, SWU’s snake was also feeling hungry, and so that was the end of Robo’s time in the bounty snake bracket.

Conclusions

Once again, I found the experience of building the bounty snake to be a lot of fun, and it was a nice change from the kind of programming that we do day-to-day at Redbrick. Offering a copy of Shift as our grand prize bounty gave a much greater incentive for competitors to come and challenge Robo to a duel than last year - Robo played in 74 games this year, versus 45 games last year. Robo’s final tally was 71 wins and 3 losses.

At one point I walked through the main hall where I could hear competitors cursing out “that darn Redbrick snake is too hard!” That made me smile a bit. Robo isn’t impossible to beat - three people managed it, with some of them even competing in the beginners’ bracket!

But it should be difficult enough that pulling off the victory feels like you accomplished something, and I hope that the teams who did beat Robo feel especially proud of their achievements. And while our server is no longer running, the source code is available to browse, or fork at https://github.com/rdbrck/bountysnake2018 . It’s released under an MIT license.

As for next year? I hear machine learning is a big thing now. Maybe we’ll try that. Or maybe not. Either way, we welcome the next generation of challengers (and snakes)!